Bumps in the road: The ultimate self-driving machine isn't here yet

Many of us want driverless cars. For consumers, they promise a safer, cheaper and stress-free form of transport. For city planners, they are a chance to make more efficient use of road space, and to clear car parks out of city centres. For companies like Google and Tesla, they are a chance to make money.

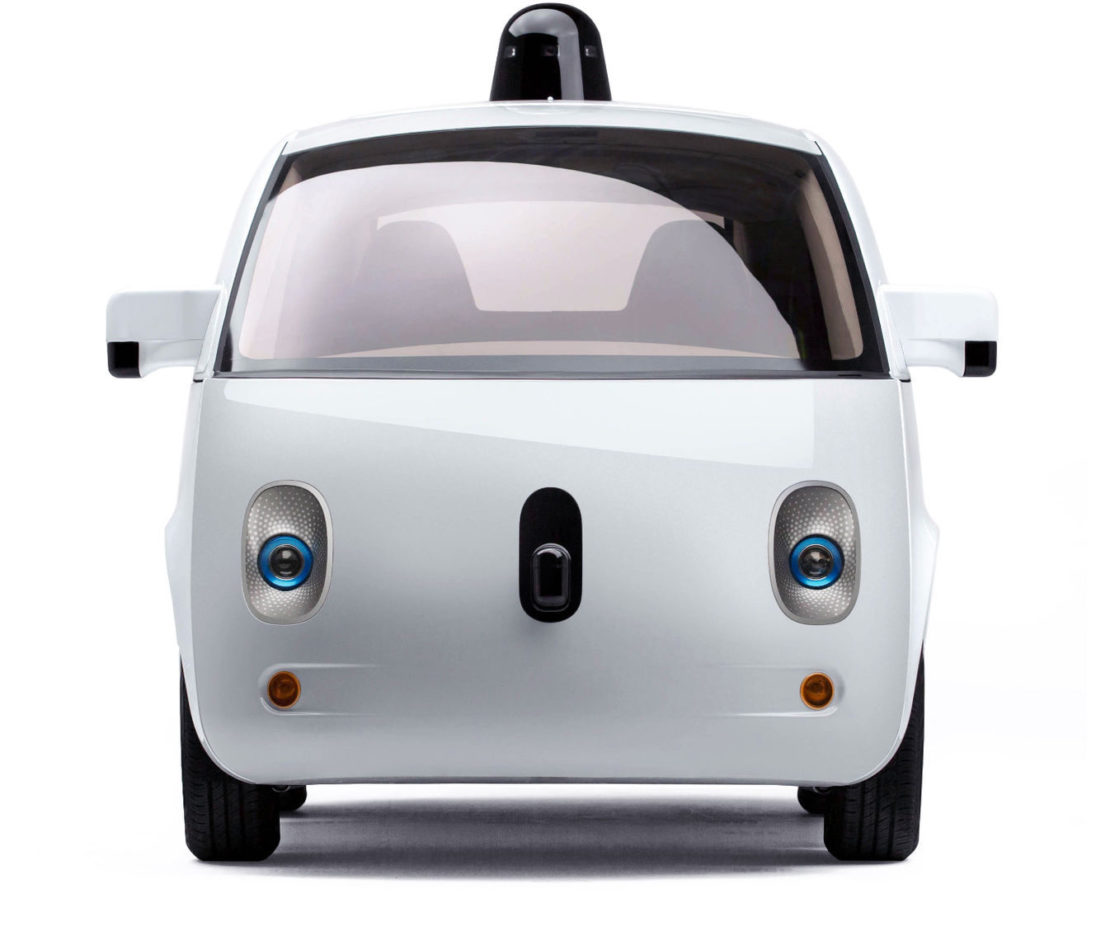

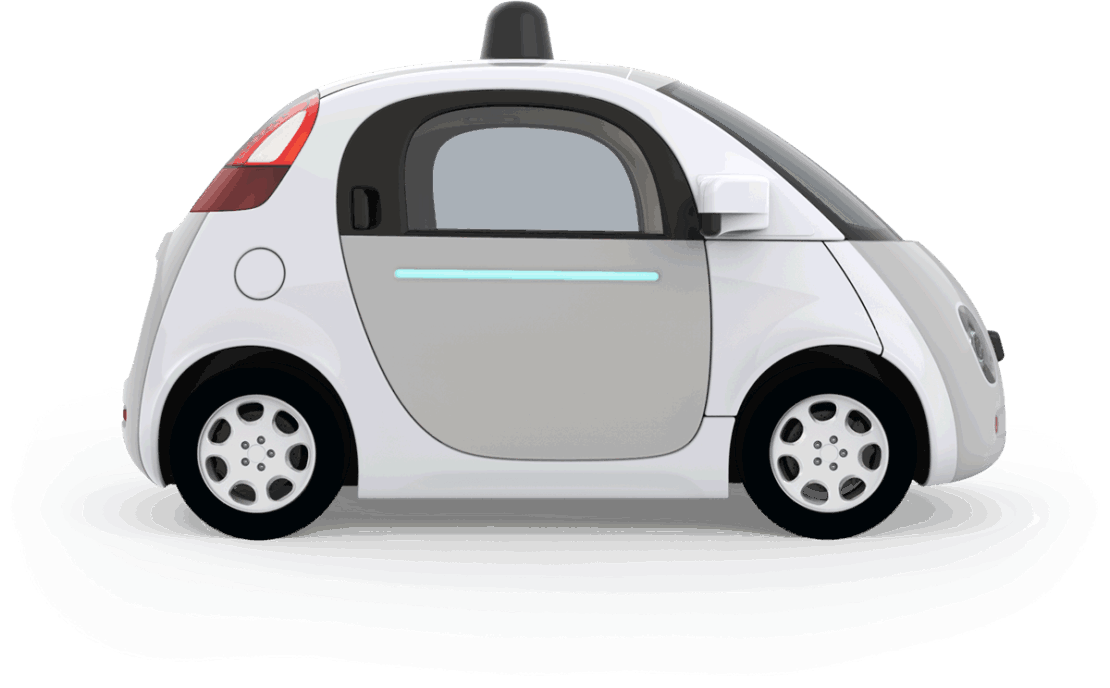

For the more excitable parts of the tech press, they are just around the corner. Maybe even already with us: Google's prototypes are driving freely on the roads of California and luxury carmakers now fit autopilots that can handle motorway driving in their new cars.

For all that I want this future to happen, I am convinced that the road to get there will be longer and less smooth than we're being led to believe. The recent news of the first fatal crash implicating a car's autopilot – for being unable to discern a white truck from a white sky – only reinforces that view.

There's nothing inevitable about self-driving cars

New technology does not inevitably lead to the social, economic or regulatory change it often needs to become widespread, nor does demand magically result in innovation. Fully automated metro trains have been running in Lille since before I was born, but are still niche. I am willing to bet that train drivers will still be around long after I'm gone: the technical challenges in replacing them all with computers are tough, and the demand is too soft. There's nothing inevitable about self-driving cars either.

So if my daily sauna-commute on London's Central line is to be replaced by the cool and comfortable air-conditioned car I want, there are some serious issues the carmakers will need to grapple with. (They are probably well aware of these already, but the tech press doesn't seem to be.)

Complexity begets complexity

The challenges involved in making fully autonomous vehicles actually work are enormous. Current prototypes only work when everything goes right. They can't really cope with roadworks or temporary lights. They can't recognise policemen. Most significantly, they can't drive routes that haven't been meticulously 3D mapped in advance.

Google's autonomous cars rely on extremely detailed maps of the roads they drive. The company's prototypes can drive on public roads only because an army of mappers and software engineers in tow makes it possible. So while they lack a human driver, they don't yet operate without human help. These maps need to be perfectly up to date if nothing is to go wrong – and the challenge of extending them from neatly circumscribed areas in California to every road in the world, and then maintaining them, is daunting. Modern, searchable computer maps are a wonderful thing, but they do not capture every detail correctly – as my satnav's occasional suggestions of shortcuts through rutted tracks attest.

Driving is full of unpredictable and bizarre situations, from trackstanding cyclists to the need to tell a stray newspaper from a live animal – not to mention the weather. Fully autonomous vehicles, ones that can drive even without a person on board, will have to be able to cope with everything the road can throw at them. If you want a car to be fully autonomous, it's not enough to make it able to guide itself through 99 or even 99.99 per cent of circumstances.
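To put rough numbers on why 99.99 per cent is not enough, here is a back-of-the-envelope sketch in Python. The function name and the figure of one million situations are illustrative assumptions, not measurements from any carmaker. The only point is that a tiny unhandled fraction, multiplied by a very large number of situations, still leaves plenty of moments where the car needs help.

```python
def expected_unhandled(coverage_percent: float, situations: int) -> float:
    """Expected number of situations the car cannot handle.

    coverage_percent: share of situations handled correctly (e.g. 99.99).
    situations: how many distinct situations the car meets (hypothetical).
    """
    unhandled_fraction = 1.0 - coverage_percent / 100.0
    return situations * unhandled_fraction


# Hypothetical workload: one million situations encountered across a fleet.
for coverage in (99.0, 99.99):
    count = expected_unhandled(coverage, 1_000_000)
    print(f"{coverage}% coverage: about {count:.0f} unhandled situations")
```

Under these assumed figures, moving from 99 to 99.99 per cent coverage cuts the unhandled cases a hundredfold, yet still leaves roughly a hundred per million that would need a human.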

The vision put forward by driverless car grandees, such as Elon Musk, is that the software is constantly improving – the only way is up. Because each car's software is updated automatically over the cloud, and each mile driven contributes to the AI's overall knowledge and refines its algorithms, the improvements will be almost exponential. The AI accumulates more driving experience than any human ever could, and only gets better. But that's not necessarily the case – reality may just be too complex in some cases.

The apparently small gap between the 'nearly driverless car' we have now and a 'fully driverless car' is actually very big, because the last few hundredths of a per cent of situations will be the most bizarre and cause the greatest increase in complexity. Everest base camp is more than halfway to the summit, but it's the last few hundred metres in the 'death zone' that are the toughest to climb.

To solve these problems you need to add complexity: more lines of computer code, extra sensors, additional levels of redundancy. The trouble is that complex systems aren't just difficult because they are big and unwieldy, but because the complexity itself causes problems.

Charles Perrow's brilliant study of accidents explains how complex systems can cause them. As you add complexity, you raise the probability that something will go wrong (as there are more systems that can break down), and you also raise the probability of unforeseen interactions between systems (as there are more connections between them). Dangerous situations can emerge from the complexity of the system itself. Even adding safety mechanisms can make things worse.
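Both effects can be illustrated with a toy calculation in Python. This is a simplified sketch, not Perrow's own model: it assumes every component fails independently with the same probability, which real systems do not, and the function names and the 0.1 per cent failure rate are hypothetical. It shows the chance of at least one failure climbing as components are added, while the number of possible pairwise interactions grows roughly with the square of the component count.

```python
from math import comb


def p_any_failure(n_components: int, p_fail: float) -> float:
    """Probability that at least one of n components fails.

    Assumes independent components with identical failure probability,
    a simplification made purely for illustration.
    """
    return 1.0 - (1.0 - p_fail) ** n_components


def pairwise_interactions(n_components: int) -> int:
    """Number of distinct pairs of components that could interact."""
    return comb(n_components, 2)


# Hypothetical per-component failure probability of 0.1 per cent.
for n in (10, 100, 1000):
    risk = p_any_failure(n, 0.001)
    pairs = pairwise_interactions(n)
    print(f"{n} components: P(any failure) = {risk:.3f}, pairs = {pairs}")
```

The pair count is the more worrying of the two: going from 10 to 1,000 components takes it from 45 to 499,500, and each pair is a place where an unforeseen interaction could hide.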

There isn't a simple fix to this problem; just a long, hard slog to make each component of the system safer, more reliable, more predictable. I suspect this means human drivers sticking around for longer than people think, even if the progress in self-driving tech means they will have less and less to do.

We've lost control

The next challenge is one of control. People get far more flustered by the fear of flying, food additives and childhood vaccines than they do by driving, smoking or over-the-counter medication.

In all of these cases, the issue is that taking a risk is different to being subjected to one, not that the public haven't understood the magnitude of the risk. A risk you haven't volunteered for, which you can't control, or which you don't understand is a lot more frightening. Air travel, processed food and vaccines are tolerated because they are genuinely very safe, with sources of risk ruthlessly weeded out. They are also very useful.

Self-driving cars won't be accepted unless they are manifestly safer and more useful than traditional vehicles. The carmakers have an opportunity here to have a proper process of engagement with the public. And that goes both ways: yes, they will need to educate the public about the risks. But they will also have to learn from how the public perceive risks – and hold themselves to significantly tougher standards than we, as a society, currently hold cars to. We will all gain from this.

Some foolhardy predictions

With the complexity of the task turning out to be a huge challenge, the technology for fully autonomous vehicles will take longer to mature than expected. Autonomous driving on motorways is coming, maybe as soon as the hype says. Cities with a grid layout (and enough money to merit the investment) might get full autonomy before too long. Chaotic, messy cities like London – let alone Mumbai – will have to wait for years. And remote country lanes? I suspect some will never be open to driverless cars. So the requirement for a human driver to be present for many – even most – trips will be with us for a long time.

In the short term, Tesla will patch their software so that the autopilot can only engage if the driver's hands stay on the wheel. (Mercedes-Benz already does this.) Drivers will be more in control, but the industry will have taken a small step back from fully autonomous driving.

Accidents like the Tesla crash will continue to cause consternation, even while routine car crashes (

In the longer term, even if they solve the technical challenges, self-driving cars will only take off if car makers learn the lessons of the airline industry: when your customers aren't in control, you have to keep them very safe indeed.